What are the main benefits of containerization?

Containerization offers benefits across the software lifecycle. Containerized apps can be readily delivered to users in a virtual workspace. More specifically, containerizing a microservices-based application, an application delivery controller (ADC), or a database (among other possibilities) offers a broad range of benefits, from greater agility during software development to easier cost control.

Containerization technology: More agile DevOps-oriented software development

Compared to VMs, containers are simpler to set up, whether a team is using a UNIX-like OS or Windows. The necessary developer tools are available on both and are easy to use, allowing for the quick development, packaging, and deployment of containerized applications across OSes. DevOps engineers and teams can (and do) leverage containerization technologies to accelerate their workflows.

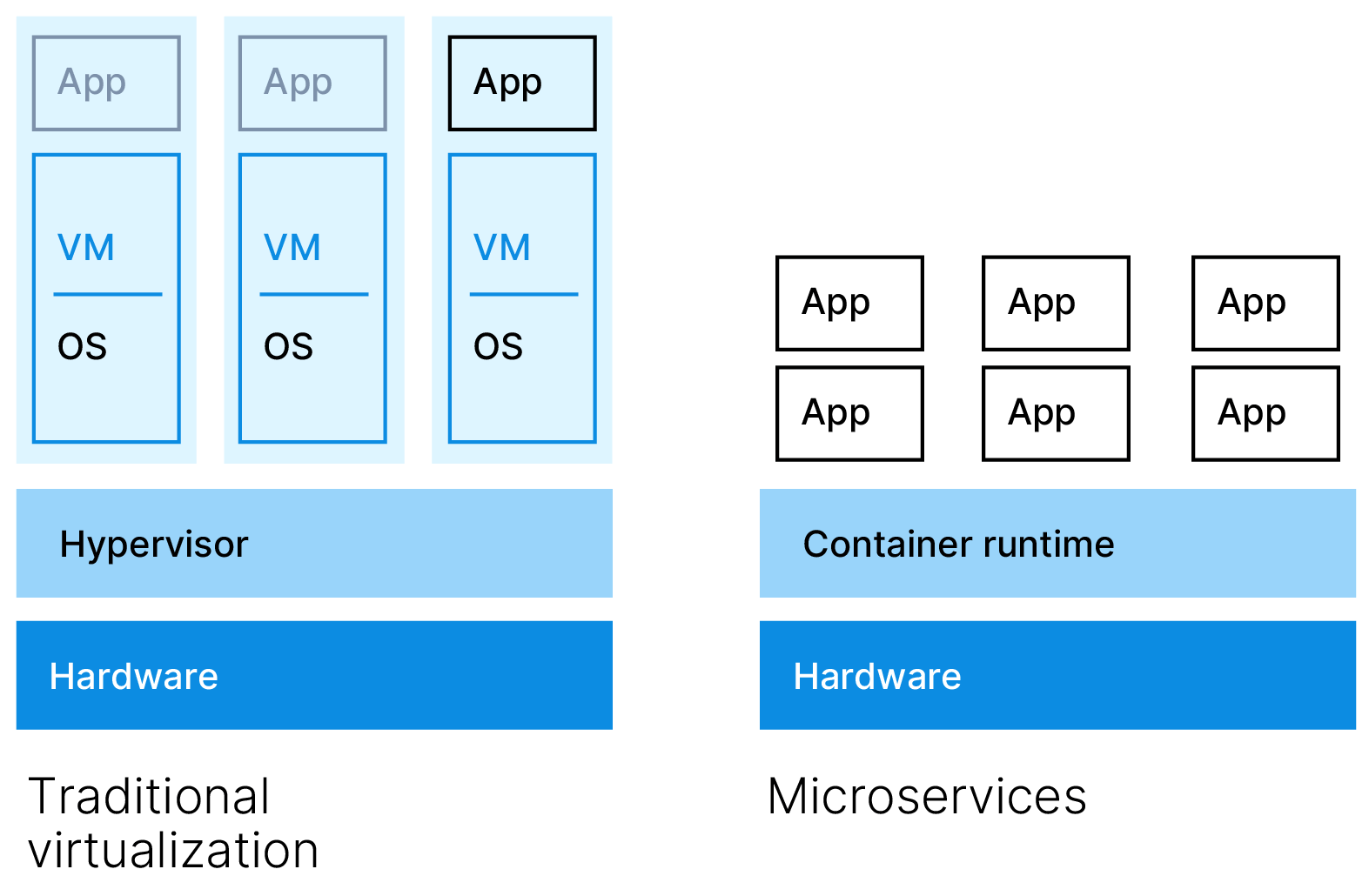

Less overhead and lower costs than virtual machines

A container doesn’t require a full guest OS or a hypervisor. That reduced overhead translates into faster boot times, smaller memory footprints, and generally better performance. It also helps trim costs: organizations can reduce some of the server and licensing costs that would otherwise go toward supporting a heavier deployment of multiple VMs. In this way, containers enable greater server efficiency and cost-effectiveness.

Fault isolation for applications and microservices

If one container fails, others sharing the OS kernel are generally not affected, thanks to the user space isolation between them. That benefits microservices-based applications, in which potentially many different components support a larger program. Microservices within specific containers can be repaired, redeployed, and scaled without taking down the whole application.
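
As a rough analogy only, the idea can be sketched with ordinary OS processes rather than real containers. Each hypothetical "service" below runs in its own Python interpreter, and the names `SERVICES` and `run_isolated` are made up for illustration. Real containers add namespace and resource isolation that this sketch does not attempt to model.

```python
import subprocess
import sys

# Hypothetical services: each value is a tiny program run in its own process.
SERVICES = {
    "auth": "print('auth ok')",
    "billing": "raise RuntimeError('billing crashed')",  # simulated failure
    "search": "print('search ok')",
}

def run_isolated(services):
    """Start every service in a separate process and collect exit codes."""
    procs = {
        name: subprocess.Popen(
            [sys.executable, "-c", code],
            stdout=subprocess.DEVNULL,
            stderr=subprocess.DEVNULL,
        )
        for name, code in services.items()
    }
    # Wait for each process; a crash in one does not stop the others.
    return {name: proc.wait() for name, proc in procs.items()}

if __name__ == "__main__":
    for name, exit_code in run_isolated(SERVICES).items():
        status = "ok" if exit_code == 0 else "failed"
        print(f"{name}: {status}")
```

Only the failing service reports a non-zero exit code; the other two complete normally, which is the behavior the paragraph above describes at the container level.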

Easier management through orchestration

Container orchestration via a platform such as Kubernetes makes it practical to manage containerized apps and services at scale. Using Kubernetes, it’s possible to automate rollouts and rollbacks, orchestrate storage systems, perform load balancing, and restart any failing containers. Kubernetes works with container runtimes that implement its Container Runtime Interface (CRI), such as containerd and CRI-O, and it runs OCI-compliant images, including those built with Docker.
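
The "restart any failing containers" behavior can be pictured as a reconcile step that compares a desired state with the observed state. The following is a toy sketch of that idea, not Kubernetes code or its API; `Replica`, `reconcile`, and the `web-N` naming scheme are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Replica:
    name: str
    healthy: bool = True

def reconcile(desired: int, replicas: List[Replica], prefix: str = "web") -> List[Replica]:
    """Return the replica set after one reconcile pass (toy model)."""
    # Drop failed replicas; an orchestrator would stop and replace them.
    running = [r for r in replicas if r.healthy]
    used = {r.name for r in running}
    # Start replacements until the observed count matches the desired count.
    next_id = 0
    while len(running) < desired:
        name = f"{prefix}-{next_id}"
        next_id += 1
        if name in used:
            continue
        running.append(Replica(name))
        used.add(name)
    # Scale down if more replicas are running than desired.
    return running[:desired]

if __name__ == "__main__":
    observed = [Replica("web-0"), Replica("web-1", healthy=False), Replica("web-2")]
    for replica in reconcile(3, observed):
        print(replica.name, "healthy" if replica.healthy else "failed")
```

A real orchestrator runs a loop like this continuously and also handles scheduling, health probes, and rollout strategy, all of which are omitted here.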

Excellent portability across digital workspaces

Containers also bring the ideal of “write once, run anywhere” closer to reality. Each container is abstracted from the host OS and runs the same way wherever it is deployed. As such, it can be built for one host environment and then ported and deployed to another, as long as the new host supports the container technologies and OSes in question. Linux containers account for a big share of all deployed containers and can be ported across different Linux-based OSes, whether they’re on-premises or in the cloud. On Windows, Linux containers can be reliably run inside a Linux VM or through Hyper-V isolation. Such compatibility supports digital workspaces where numerous clouds, devices, and workflows intersect.